Browser-based detection keeps your sensitive data local, and you decide which data types to monitor and what gets sent to Nudge Security.

In addition to full conversation details, we track file uploads and copy/paste actions from SaaS sources into AI chatbot tools, so you know how data is being shared.

Custom alerts notify your team the moment sensitive data is detected. Route notifications to your preferred channel, and filter alerts to reduce noise.

Common questions about Nudge Security's AI conversation monitoring feature

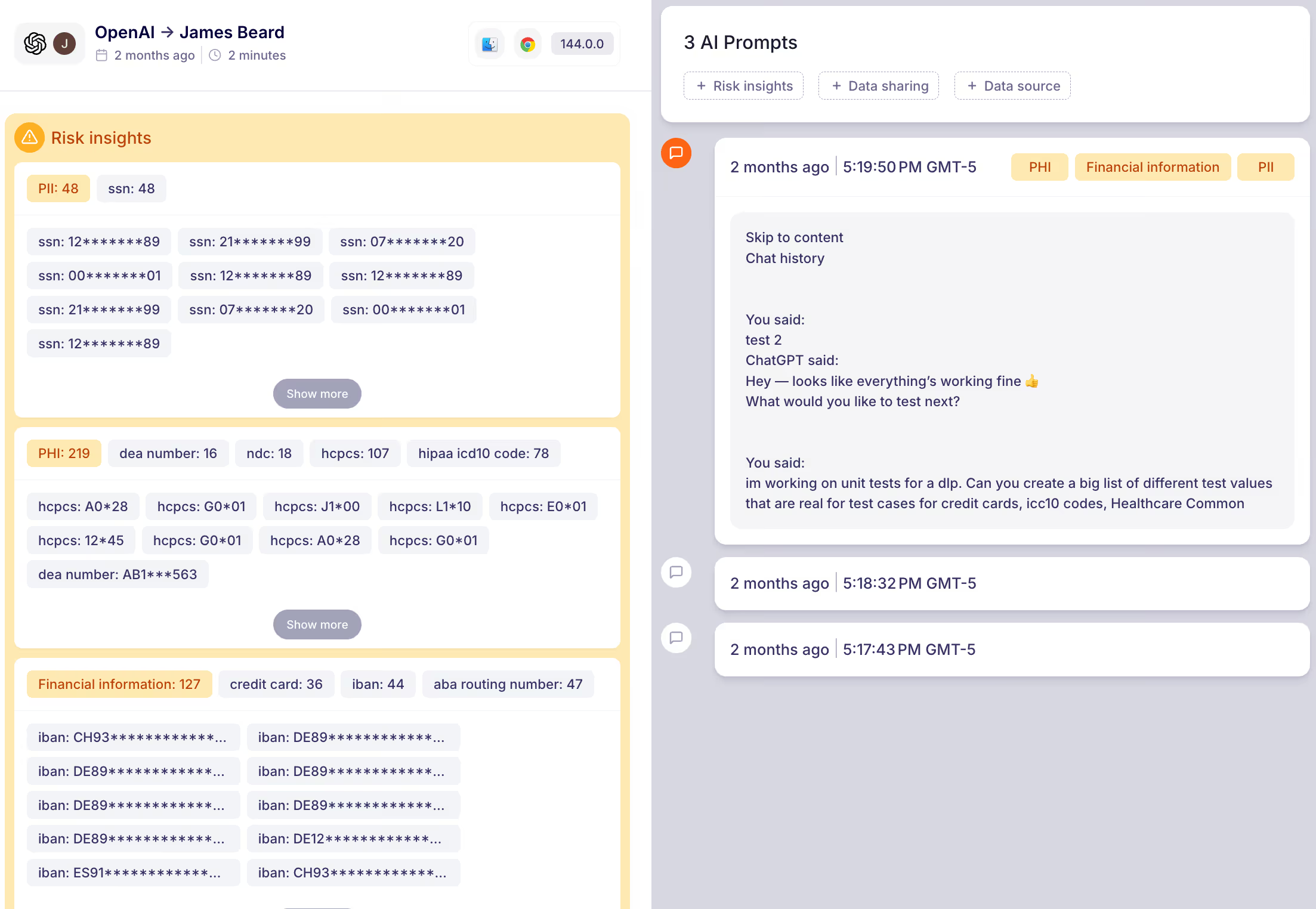

AI conversation monitoring is the practice of detecting and tracking sensitive data that employees share with AI chatbot tools such as ChatGPT, Claude, and Gemini. Nudge Security's browser-based feature monitors AI conversations in real time and alerts your security team whenever sensitive data, such as credentials, PII, PHI, or financial information, is uploaded or pasted into an AI tool.

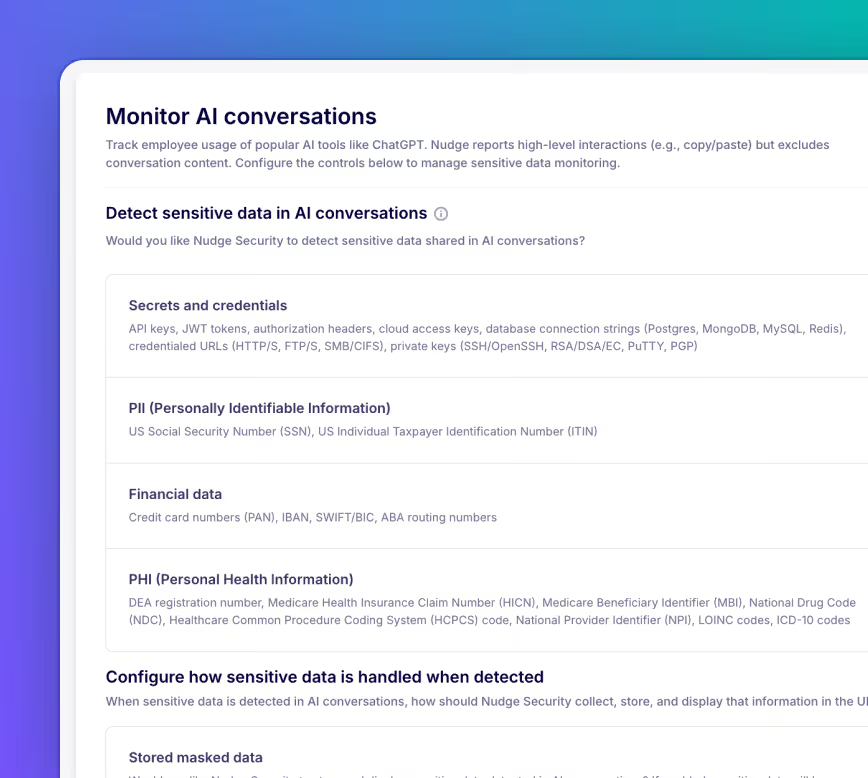

Detection happens directly in the browser, which means sensitive data is identified locally before it is evaluated and flagged. You control which types of data are monitored (secrets and credentials, personally identifiable information, protected health information, financial data, and more), so you can tailor coverage to your organization's risk profile.
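For illustration only, here is a minimal Python sketch of configurable, local detection: text is scanned in place for just the categories an administrator has enabled, and the result is a list of finding positions. The category names, the two regular expressions, and the `detect_sensitive` helper are hypothetical; the page does not describe how Nudge Security's detectors actually work, and production detectors are far more thorough than a pair of regexes.

```python
import re
from dataclasses import dataclass

# Hypothetical detectors for illustration only (not Nudge Security's rules).
DETECTORS = {
    "secrets": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),  # AWS access key ID shape
    "pii": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),  # simple email address shape
}


@dataclass
class Finding:
    category: str
    start: int  # index of the first matched character
    end: int    # index one past the last matched character


def detect_sensitive(text: str, enabled: set[str]) -> list[Finding]:
    """Scan text locally, evaluating only the categories that are enabled."""
    findings: list[Finding] = []
    for category in sorted(enabled):
        pattern = DETECTORS.get(category)
        if pattern is None:
            continue  # no detector defined for this category in the sketch
        for match in pattern.finditer(text):
            findings.append(Finding(category, match.start(), match.end()))
    return findings


if __name__ == "__main__":
    sample = "key AKIAABCDEFGHIJKLMNOP and bob@example.com"
    for finding in detect_sensitive(sample, {"secrets"}):
        print(finding)
```

Because disabled categories are never evaluated, narrowing the enabled set directly narrows what the scanner looks at.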

Nudge Security monitors the AI chatbot tools your workforce is most likely to use, including ChatGPT, Google Gemini, Claude, and more. We're actively expanding support for additional AI tools as the market evolves.

An AI gateway isn't required. Because monitoring happens at the browser level, you get AI governance without the limitations or the deployment complexity of a gateway: there's no need to route traffic through a proxy or reconfigure your network.

This is not keylogging. Monitoring activates only on supported AI tools when the feature is enabled, and only for the data types you configure; it's purpose-built to catch sensitive data flowing into AI tools.
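Scope limits like these amount to a gate that runs before any scanning happens. The sketch below assumes a hypothetical allowlist of AI tool hostnames and a hypothetical `should_monitor` helper; it is not Nudge Security's code or its actual list of supported tools.

```python
from urllib.parse import urlparse

# Illustrative allowlist only; not Nudge Security's list of supported tools.
SUPPORTED_AI_HOSTS = {"chatgpt.com", "gemini.google.com", "claude.ai"}


def should_monitor(url: str, feature_enabled: bool, enabled_types: set[str]) -> bool:
    """Return True only when the feature is on, at least one data type is
    configured, and the page belongs to a supported AI tool."""
    if not feature_enabled or not enabled_types:
        return False
    host = (urlparse(url).hostname or "").lower()
    # Match the host itself or a subdomain of it, but not look-alike
    # domains such as "notchatgpt.com".
    return any(host == h or host.endswith("." + h) for h in SUPPORTED_AI_HOSTS)


if __name__ == "__main__":
    print(should_monitor("https://chatgpt.com/c/abc", True, {"pii"}))
    print(should_monitor("https://mail.example.com/", True, {"pii"}))
```

Matching on the exact host or a dot-prefixed suffix keeps look-alike domains out of scope, which a plain substring check would not.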

You choose how detected data is handled: Nudge Security can mask or store it based on your configuration. Alerts are routed to your team via Slack, email, Microsoft Teams, or webhook, and conversation data is also available via API so you can feed it into your existing incident response workflows.
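To show the difference between the mask and store options, here is a minimal sketch that builds a JSON alert body either with detected spans masked or with the original text kept. The payload fields (`tool`, `category`, `content`), the `keep` parameter, and both helper names are hypothetical and do not describe Nudge Security's actual webhook schema or API.

```python
import json


def mask_spans(text: str, spans: list[tuple[int, int]], keep: int = 4) -> str:
    """Replace each (start, end) span with asterisks, leaving the last `keep`
    characters visible. Assumes spans do not overlap."""
    out: list[str] = []
    cursor = 0
    for start, end in sorted(spans):
        out.append(text[cursor:start])
        secret = text[start:end]
        visible = secret[-keep:] if 0 < keep < len(secret) else ""
        out.append("*" * (len(secret) - len(visible)) + visible)
        cursor = end
    out.append(text[cursor:])
    return "".join(out)


def build_alert(tool: str, category: str, prompt: str,
                spans: list[tuple[int, int]], store_raw: bool) -> str:
    """Build a hypothetical webhook body, masking detected spans unless the
    configuration says to store the raw text."""
    payload = {
        "tool": tool,
        "category": category,
        "content": prompt if store_raw else mask_spans(prompt, spans),
    }
    return json.dumps(payload)


if __name__ == "__main__":
    print(build_alert("ChatGPT", "pii", "pin 123456 ok", [(4, 10)], store_raw=False))
```

Masking before the payload is serialized means the raw value never appears in the alert body when the mask option is chosen.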

File uploads are covered as well. Nudge Security tracks file uploads and copy/paste actions from SaaS sources to AI tools, including file name, source, size, and type, so you can map high-risk data flows and understand the full blast radius of what's been shared.

Most AI security approaches require employees to use a managed browser, a data loss prevention agent, or a network-level proxy. Nudge Security's browser extension works without any of those constraints, giving you visibility into AI conversations across your workforce without adding friction or requiring a rip-and-replace of your existing security stack.